Securing the building blocks of embedded software

Embedded systems are special-purpose systems that cover a wide range of applications, from home electronics and industrial control systems to medical devices and avionics. The remote management and telemetry features of the so-called "Internet of Things" family of embedded devices have made them popular, and their deployment is almost ubiquitous. From a security standpoint, embedded software is not that different from software found in other domains. However, the criticality of its operation, its exposure on public networks and its security limitations make it a very attractive target for attackers. This article presents an overview of the building blocks of today's embedded software, analyses inherent weaknesses in the way this software is built and deployed, and highlights recent developments in the handling of the relevant risk.

CENSUS has been performing security assessments on embedded hardware and software for more than a decade now. From mobile devices and smart medical equipment, to security appliances and cryptocurrency wallets, CENSUS has helped vendors release high quality products through its consulting and assessment services. The insights found in this article come from the experience of our engineers in the embedded world. If you'd like to see more of our work on the subject, have a look at our research publications, public advisories and contributions to major works such as the popular "Practical IoT Hacking" book or ENISA's "Procurement Guidelines for Cybersecurity in Hospitals".

The embedded software stack

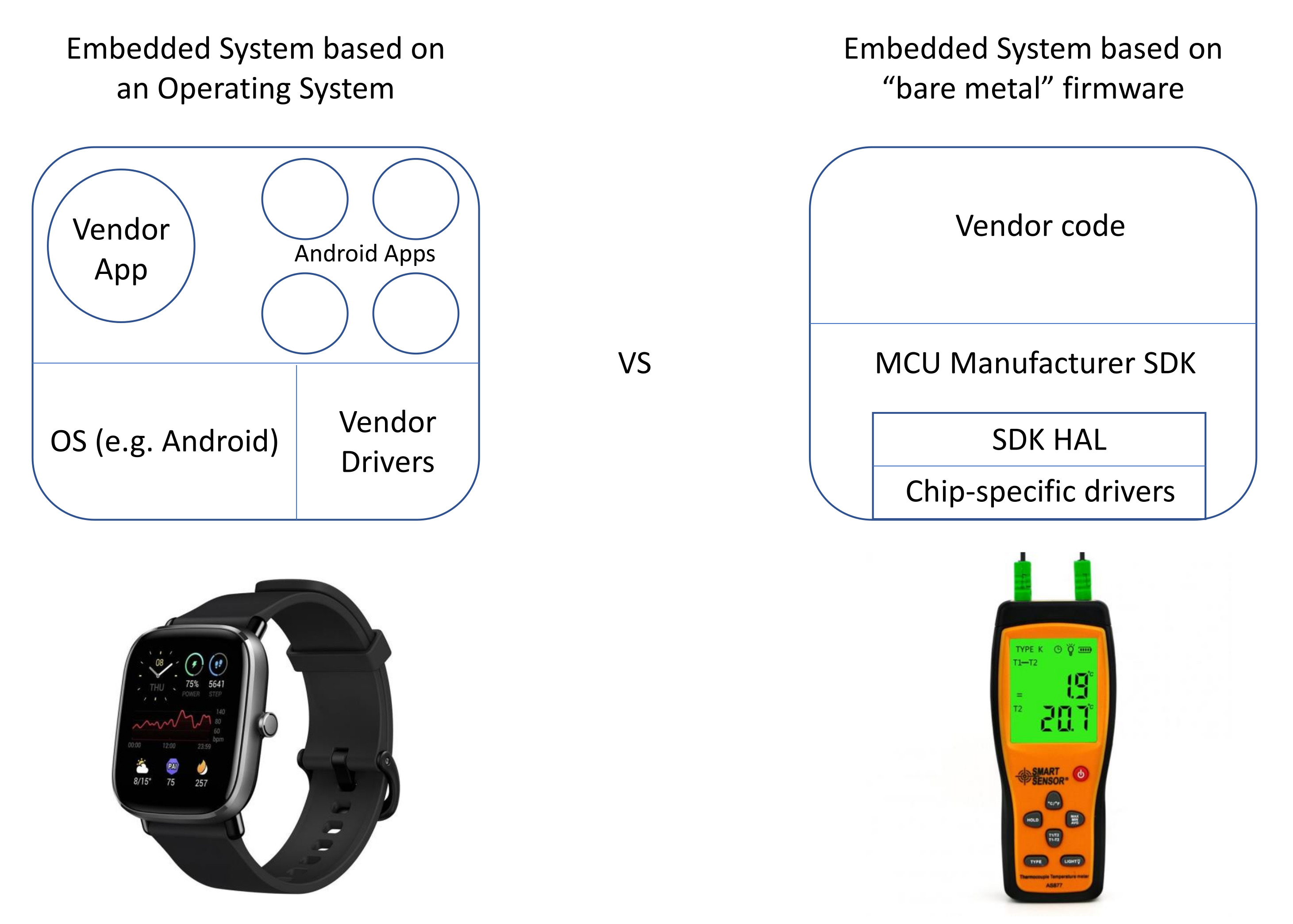

Embedded systems may execute applications over an Operating System (OS) or as part of “bare metal” firmware. Operating Systems like Android allow vendors to ship embedded solutions faster, as they build on a standard firmware base and their main contributions lie in application development. Other vendors may wish to augment the Operating System to add support for new hardware / technologies, while retaining compatibility with an ecosystem of applications (such as the ones available on the Google Play Store). In “bare metal” firmware, on the other hand, there is no clear distinction between the operating system code that drives the hardware and the application code. Vendors in these cases develop special-purpose software that both drives the hardware and fulfills the desired functionality. This may be the only viable solution when computing on a resource-constrained platform. Other firmware that is typically part of an embedded system includes a bootloader, code handling flashing / debugging capabilities, and firmware executed by board components other than the main microcontroller.

Figure 1: Components of OS-based and "bare metal" embedded systems

The code that is placed in embedded firmware can be naturally divided into two groups: code that is maintained by the vendor and code that is maintained by third parties. It is very common for embedded developers to build upon a Software Development Kit (SDK) provided by a chip vendor, in order to control chip functionalities from their code. It is also very common for developers to reuse ready-made code (found online or acquired) to fulfill standard tasks, such as reading files off a FAT file system on an SD card. The grouping we mentioned earlier is important; it is much easier for a vendor to control updates on code that was developed in-house rather than code that is maintained by third parties. If third party code is not open source (e.g. delivered as object files) then it's nearly impossible for a vendor to make local modifications to suit product needs.

Much like the firmware itself, embedded SDKs are split into low-level and high-level components. Low-level components implement tasks which may be chip-specific, and commonly expose an Application Programming Interface (API), known as the Hardware Abstraction Layer (HAL), to higher-level components. The HAL abstracts the chip-specific code and allows developers to work with higher-level components that control hardware of a similar type through a common command interface.
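To make the layering concrete, here is a minimal sketch of a HAL-style interface. It is written in Python purely for illustration (real SDKs typically expose this layer in C), and every name in it (`Uart`, `ChipAUart`, `HostUart`, `report_temperature`) is hypothetical and not taken from any vendor SDK.

```python
from abc import ABC, abstractmethod


class Uart(ABC):
    """Hypothetical HAL interface: the only UART API higher layers may use."""

    @abstractmethod
    def write(self, data: bytes) -> int:
        """Send bytes and return how many were accepted."""


class ChipAUart(Uart):
    """Stand-in for a chip-specific driver that would write to registers."""

    def __init__(self) -> None:
        self.tx_register_log = []  # simulates writes to a TX data register

    def write(self, data: bytes) -> int:
        for byte in data:
            self.tx_register_log.append(byte)
        return len(data)


class HostUart(Uart):
    """Host-side replacement for running the same code off-device."""

    def __init__(self) -> None:
        self.captured = bytearray()

    def write(self, data: bytes) -> int:
        self.captured.extend(data)
        return len(data)


def report_temperature(uart: Uart, celsius: int) -> int:
    """Higher-level component: depends on the HAL interface, not on a chip."""
    return uart.write(f"TEMP={celsius}\n".encode("ascii"))
```

Because `report_temperature` only sees the `Uart` interface, the same higher-level code runs unchanged against either implementation.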

The SDK bundle typically consists of the code needed to perform common chip / firmware operations and a compiler toolchain that builds firmware binaries for the designated target architecture. As the SDK comprises a large volume of software, it is quite possible that this software includes components controlled by third parties, and thus embedded platform SDK releases may be dependent on releases of third party projects.

All of the software dependencies mentioned up to this point may end up contributing code to the embedded firmware. In the same way, these software projects may also introduce issues to the embedded system.

On firmware updates

As with any type of system, an embedded system may carry security vulnerabilities stemming from design issues, hardware / software implementation issues or default configuration issues. While fixing design and hardware component issues would require the re-design and replacement of a product, software issues could in theory be addressed more easily through firmware updates. However, it is often the case that these devices provide users with no firmware update capability (or that an update is provided too late, due to delays imposed by third party suppliers etc.). To push vendors into maintaining and delivering security updates, and for better transparency towards customers, the European Union published in 2019 a regulation (see article 55) that requires ICT product vendors to provide, with each certified product, supplementary information on "the period during which security support will be offered to end users, in particular as regards the availability of cybersecurity related updates". Delayed security updates, however, are a more complex problem to solve, one that requires more effort and better communication from the suppliers in a product ecosystem. In February 2021 Linaro, one of the major players in toolchains for embedded systems, announced it would be releasing monthly updates to its toolchain, which up to then had to follow the six month release cycle of ARM. Such a change makes it possible for device vendors to release firmware updates sooner when a toolchain-related security issue has been found.

In addition, embedded devices may perform firmware updates in an insecure manner, allowing for man-in-the-middle attacks. To avoid such issues it is best to stick to a standard firmware update implementation if possible (for example, see the update mechanisms of AWS FreeRTOS, mender.io etc.) and avoid implementing a custom update method. If a custom-developed mechanism is unavoidable, then this mechanism should not be used in production environments without first having passed a security audit.
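As an illustration of the kind of check an update mechanism performs before flashing, the sketch below refuses any image whose SHA-256 digest differs from an expected value. It is a simplified stand-in, not a replacement for a standard update framework: it assumes the expected digest comes from a manifest whose authenticity has already been established (for example through a signature check, which is not shown), and `apply_update` and the `flash_write` callback are hypothetical names.

```python
import hashlib
import hmac
from typing import Callable


def image_digest(image: bytes) -> str:
    """Return the SHA-256 digest of a firmware image as lowercase hex."""
    return hashlib.sha256(image).hexdigest()


def is_update_acceptable(image: bytes, expected_sha256_hex: str) -> bool:
    """Compare the image digest with the trusted value in constant time."""
    actual = image_digest(image).encode("ascii")
    expected = expected_sha256_hex.strip().lower().encode("utf-8")
    return hmac.compare_digest(actual, expected)


def apply_update(
    image: bytes,
    expected_sha256_hex: str,
    flash_write: Callable[[bytes], None],
) -> bool:
    """Write the image only if it matches the trusted digest.

    flash_write is a hypothetical callback standing in for the routine
    that would program the device's flash memory.
    """
    if not is_update_acceptable(image, expected_sha256_hex):
        return False  # image modified in transit or wrong file: do not flash
    flash_write(image)
    return True
```

An image that was altered in transit produces a different digest, so `apply_update` returns `False` and nothing is written.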

Sometimes firmware update files are maintained in insecure repositories or through insecure practices, allowing third parties to introduce malicious code to all devices receiving the update. A suitable example of this is the SolarWinds attack, in which an unauthorized party gained access to public and private organizations through trojanized updates to SolarWinds’ Orion software. Such attacks require defenses at the operational level, touching all points in the supply chain, including staff onboarding procedures, code repository access policies, third party provider evaluation and security policy enforcement, code distribution access and integrity controls, and many others. NIST in the US has been very active in this space, proposing a Cyber Security Supply Chain Risk Management Program for use by federal agencies, companies, and others.

Embedded devices may also be installed in environments where applying a firmware update is difficult or risky, as is the case in industrial, maritime and other OT environments. In such environments, users are left with devices that carry known security vulnerabilities which cannot be addressed correctly and which could potentially be exploited by adversaries. This risk is further amplified when considering the connected nature of some of these devices (e.g. industrial control systems, medical IoT equipment). The "Baseline Security Recommendations for IoT" report by ENISA highlights the importance of network filtering and segmentation (see Security Measure GP-TM-47) as a proactive defense against attacks on vulnerable IoT devices.

On default configuration

The use of common default credentials on embedded devices has plagued Internet infrastructure for decades now, with perhaps the most memorable incident being that of the 2016 Mirai botnet which left much of the internet inaccessible on the U.S. east coast. Devices bearing user (or service) accounts with guessable credentials are easily taken over by attackers, and are used as mechanisms for malware infection, pivot points for further attacks, or as members of botnets.

Similar vulnerabilities can also be found in relaxed default configurations, where unneeded services are exposed to third parties over the Internet (sometimes even without authentication). In section 4.3.3 of the "Baseline Security Recommendations for IoT" document, ENISA stresses the importance of "strong default security and privacy" measures. All in all, these configuration artifacts can be easily picked up through security auditing efforts (as early as the design phase) and must also be verified at the release engineering level. This is a practice that CENSUS follows in its Secure SDLC services.
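A release engineering check of this kind can be quite small. The sketch below audits a default configuration for well-known credentials and for exposed or unauthenticated services. The configuration layout (`accounts`, `services`, `auth_required`), the credential list and the service allow-list are all assumptions made for this example and would need to reflect the actual product.

```python
from typing import Dict, List

# Illustrative values only: a real check would use a maintained list.
KNOWN_DEFAULT_CREDENTIALS = {("admin", "admin"), ("root", "root"), ("admin", "1234")}
ALLOWED_SERVICES = {"https"}


def audit_default_config(config: Dict) -> List[str]:
    """Return human-readable findings for a device's default configuration."""
    findings = []
    for account in config.get("accounts", []):
        pair = (account.get("user"), account.get("password"))
        if pair in KNOWN_DEFAULT_CREDENTIALS:
            findings.append(f"default credentials for account '{pair[0]}'")
    for service in config.get("services", []):
        name = service.get("name")
        if name not in ALLOWED_SERVICES:
            findings.append(f"unneeded service exposed: {name}")
        elif not service.get("auth_required", False):
            findings.append(f"service without authentication: {name}")
    return findings
```

A release pipeline could fail the build whenever `audit_default_config` returns a non-empty list for the configuration that is about to ship.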

On custom code

As firmware may be operating in a constrained environment, it may be the case that its code is developed using low-level programming languages such as C and Assembly, to allow for better performance. Such implementations are often vulnerable to memory corruption issues, where the attacker is able to influence at runtime the firmware's code or data.

Of course, with access to the source code of custom-developed components, vendors enjoy a wide array of methods for identifying vulnerabilities, such as code auditing, static analysis, or even the use of semantic code search tools such as CodeQL, Ocular, Joern and Semgrep.

It is equally important though, to look for proactive ways to avoid such patterns of vulnerabilities. Recent developments in programming languages have yielded systems programming languages such as Rust, where the compiler provides specific guarantees on the safety properties of the produced software (e.g. memory safety, thread safety etc.).

On third party components

As previously discussed, it is common for embedded device vendors to integrate third party components, both in the software and hardware of a device. These components are usually perceived as trusted "black boxes", as vendors might not want to carry the weight of analyzing another company's product, coordinating with an external party for updates, maintaining local fixes for the component etc. What is interesting is that these components may be carrying out security-sensitive work (such as secure boot, or the cryptographic storage of secrets). Moreover, the exploitation potential of an issue might not be obvious to the vendor (e.g. an issue in code controlling an LED display), yet it might have serious repercussions on the device as a whole (e.g. memory corruption leading to MCU takeover).

It is perhaps for this reason that reusable open source components with permissive licenses have gained such momentum in the embedded world (with all the usual risks regarding malicious access to the component repository / distribution channels). There, the quality of the component code becomes a responsibility of the project community.

Others, wishing to minimize the related risk, employ a zero-trust architecture in their products. A third party component may be considered "non-trusted" at the design stage, and its operation is then isolated from the rest of the device. Imagine, for example, a bitcoin wallet device that does not depend on any single chip for the generation of random numbers.

Nowadays, there are also steps taken towards the production of hardware components based on an open design. This design can be peer reviewed by the community in a manner similar to the process followed in open source software components.

It becomes apparent that managing the risks imposed by vulnerabilities in third party components requires first an elaborate identification effort: many third party components are bundled in such a way, that it is non-trivial for vendors to identify their primary "ingredients". It is customary for the suppliers of such components to provide versions of these, bundled with other third party components which may have vulnerabilities of their own. Are vendors aware of these "inner" dependencies? The short answer is no. And for this reason, the weight of maintaining a fixed version of the component lies on the supplier. But the supplier may for whatever reason delay the provision of a fixed version. So the vendor is left with a problem; an unknown problem in its product. However, as this problem might be related to a popular third party component, it might be known to an attacker. Therefore, this creates a window of opportunity for an attacker to exploit the flaw on the vendor's vulnerable device.

This is where the "SBOM" ("Software Bill of Materials") comes into play. Recently, the United States government issued an executive order on Improving the Nation's Cybersecurity which, in the light of the recent SolarWinds attack, asks suppliers to provide a Software Bill of Materials to purchasers in the Federal government (or working for the Federal government). The SBOM is a list of the primary components that make up a piece of software (source, component name, version etc.). It is similar in concept to the "BOM" ("Bill of Materials") that device manufacturers typically prepare for hardware compliance purposes. The National Telecommunications and Information Administration of the US Department of Commerce has issued a whitepaper on common SBOM formats and tools that suppliers can use. With the SBOM registry at hand, a vendor (and a user) may independently track the state of known vulnerabilities in components integrated into a product and take appropriate actions when a vulnerable component is identified.
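The tracking step can be sketched as a lookup of SBOM entries against a feed of known issues. The example below is deliberately simplified: it matches on exact name and version, whereas real tooling works with standard SBOM formats and version ranges. The component names, supplier names and advisory identifiers are placeholders invented for this example.

```python
from typing import Dict, List, NamedTuple, Tuple


class Component(NamedTuple):
    """One SBOM entry: where it came from, what it is, which version."""
    supplier: str
    name: str
    version: str


def find_known_vulnerable(
    sbom: List[Component],
    advisories: Dict[Tuple[str, str], List[str]],
) -> Dict[Component, List[str]]:
    """Map each SBOM component to the advisory IDs that affect it, if any."""
    hits = {}
    for component in sbom:
        ids = advisories.get((component.name, component.version), [])
        if ids:
            hits[component] = list(ids)
    return hits


# Hypothetical product SBOM and advisory feed (placeholder names and IDs).
SBOM = [
    Component("ExampleChipCo", "example-libc", "3.1.0"),
    Component("ExampleChipCo", "example-crypto-sdk", "2.4.1"),
    Component("in-house", "sensor-app", "1.0.0"),
]
ADVISORIES = {("example-libc", "3.1.0"): ["EXAMPLE-2021-0001"]}
```

Calling `find_known_vulnerable(SBOM, ADVISORIES)` flags only the `example-libc` entry, which is the signal a vendor would use to request or apply a fixed version.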

In response to the executive order, the Linux Foundation posted an article highlighting a number of projects that it fosters in order to mitigate risks associated with common system building blocks and their supply chain. Among these are the SPDX SBOM standard and the sigstore project for software component verification.

CENSUS spends a considerable amount of time and effort in identifying and examining third party components in security assessments. For organizations that are interested in performing such an analysis within a product development environment there's a plethora of tools in the market that can help automate the process. These tools perform Software Composition Analysis and some of them also report on identified vulnerable components.

To look for (previously unknown) vulnerabilities in large pieces of code, and especially memory-related vulnerabilities, one can use fuzz testing. Fuzz testing (or "fuzzing") is the process of automatically providing unexpected or malformed inputs to a piece of software in order to identify program states that are not handled correctly. For projects where fuzz testing might be difficult to apply (due to limitations imposed by fuzzing on the actual device etc.) it is common for security engineers to "slice" specific layers of the program code and fuzz these independently, possibly on more powerful hardware. In the past few years there have been a number of research initiatives on the topic of re-hosting firmware on other hardware, as this allows for better code coverage in fuzz testing and other dynamic analysis techniques. One such approach, found in the HALucinator paper, abstracts the HAL and lower-level components of the firmware so that higher-level components can be examined in an automated manner.
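The idea of fuzzing a "sliced" layer on a host machine can be shown with a toy example. The sketch below is a naive mutation-based fuzzer aimed at a hypothetical record parser that contains a deliberate bug: it trusts a length byte supplied by the sender. Neither the parser nor the fuzzer is taken from a real project, and production fuzzers are coverage-guided and far more capable. In C the same bug would be an out-of-bounds read, while in Python it surfaces as an `IndexError`.

```python
import random
from typing import Callable, List


def parse_record(packet: bytes) -> bytes:
    """Toy target: first byte is the payload length, the rest is payload."""
    if not packet:
        raise ValueError("empty packet")
    declared_len = packet[0]
    payload = packet[1:]
    # BUG (intentional): declared_len is never checked against len(payload),
    # so a lying length field makes the copy loop read past the payload.
    return bytes(payload[i] for i in range(declared_len))


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one to three randomly chosen bytes of a non-empty seed."""
    data = bytearray(seed)
    for _ in range(rng.randint(1, 3)):
        data[rng.randrange(len(data))] = rng.randrange(256)
    return bytes(data)


def fuzz(
    target: Callable[[bytes], object],
    seed: bytes,
    iterations: int,
    rng_seed: int = 0,
) -> List[bytes]:
    """Feed mutated inputs to target and collect those that crash it."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            target(candidate)
        except ValueError:
            continue  # input rejected cleanly: this is handled behaviour
        except Exception:
            crashes.append(candidate)  # unhandled state worth investigating
    return crashes
```

Starting from a valid seed such as `bytes([3]) + b"abc"`, every input collected by `fuzz` has a length byte larger than its actual payload, which points straight at the missing bounds check.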

But what about the low-level components that are provided by chip vendor SDKs? Unfortunately these also contribute vulnerabilities to firmware binaries. In 2019 CENSUS identified a series of NULL pointer dereference issues in newlib, caused by improper handling of memory allocation errors. Newlib is a very popular C library used in embedded systems. The issues also affected all downstream libraries and SDKs that were based on newlib, such as newlib-nano, picolibc and, most importantly, the GNU ARM Embedded Toolchain, found in the development kits of many microcontrollers. These issues would cause the firmware code to access the first few bytes of program memory, which in some devices would lead to a crash, while in others could lead to the installation of malicious interrupt handlers. Similar discoveries (in multiple IoT firmware libraries) were also published by Microsoft in 2021 in their "BadAlloc" advisory.

Another set of interesting vulnerabilities were CVE-2019-16129 and CVE-2019-16128, two buffer overflow issues which affected code in the Microchip / Atmel cryptoauthlib SDK, an SDK used to control the company's cryptographic co-processors. CENSUS found that it was possible for someone with physical access to a device to execute arbitrary code on the main microcontroller, if the device used the key or signature generation features of the cryptographic co-processor.

Closing Notes

On August 18th 2021, just two days after a set of SDK vulnerabilities were disclosed for Realtek SoCs, SAM Seamless Network discovered that these were being actively exploited by attackers over the Internet. The pervasive nature and critical deployment of embedded devices call for strategic planning to counter such cybersecurity threats. Moreover, the complexity arising from the management of cybersecurity issues (sometimes stemming from an ecosystem of different suppliers) makes it clear to embedded device vendors that cybersecurity issues can be dealt with efficiently only through community efforts.

This article has highlighted recent developments in standards, tooling and techniques that can help build trustworthy products. If you require more information regarding any of the above topics feel free to reach the authors via email.

Any products mentioned or depicted in this article serve only as example material and are the property of their respective owners.